AI from a Consumer Perspective

Thank you to our Sponsor: Grow Max Value

AI from the consumer perspective is no longer a story about futuristic robots or abstract breakthroughs. It is a story about everyday choices, quiet tradeoffs, and the way small features change habits.

From the consumer point of view, the biggest question is not whether AI is powerful. People already know it is powerful. The biggest question is whether AI makes life easier without making life riskier. That is the consumer contract AI has to earn.

What consumers actually want from AI

Most people do not want “intelligence” in the abstract. They want time back, fewer hassles, and less cognitive load. They want outcomes that feel like a helpful assistant rather than a complicated new thing to manage.

A consumer-focused view of AI starts with a simple list of outcomes.

• Reduce friction in tasks people already do every day.

• Improve quality of results without requiring expertise.

• Personalize experiences without feeling intrusive.

• Increase safety and trust rather than create uncertainty.

• Keep the human in control, especially when stakes rise.

That seems obvious, but it leads to a critical point. Consumer AI fails when it is impressive but not dependable. Consumers will try a magical feature once. They will keep using it only if it works reliably and predictably.

The consumer era of AI is about products, not models

From the consumer perspective, AI is not a model. AI is a feature inside a product. That means consumer AI competes with every other feature on three axes.

• Speed, because people do not wait long for “smart” if it feels slow.

• Confidence, because people hate uncertainty disguised as certainty.

• Convenience, because any extra steps reduce adoption.

A consumer does not care whether the feature uses a transformer, a diffusion model, or something else. They care whether it solves a real problem and whether it creates new problems in the process.

The four consumer categories where AI is showing up

It helps to break consumer AI into four broad categories. Each category has its own risks, and each category suggests what consumers should watch for.

AI as creator

This includes text generation, image generation, video generation, and voice generation. The consumer appeal is obvious. Create faster. Create better. Create things you did not have the skill to create before.

• Draft messages, emails, and social posts.

• Generate images for invitations, ads, and presentations.

• Create short videos and memes.

• Produce voiceovers and audio edits.

The consumer risk is also obvious. When creation gets cheap, noise increases. Consumers have to spend more time evaluating what is real, what is manipulated, and what is low-effort spam.

AI as organizer

This includes summarization, search, and personal information management.

• Summaries of long articles, meetings, and messages.

• Smart inboxes that highlight what matters.

• Search that answers questions rather than just returning links.

• Automatic to-do lists extracted from conversations.

The consumer value is huge because people are overwhelmed. The risk is that summaries can omit key details, and that “organizing” can quietly become “deciding,” where the system filters what someone sees.

AI as helper in commerce

This includes shopping assistants, travel planning, price comparisons, and customer support.

• Product comparison and selection help.

• Travel itineraries and booking suggestions.

• Returns and refunds handled by chatbots.

• Personalized offers and discounts.

The consumer risk is conflicts of interest. A shopping assistant might optimize for the retailer’s margins rather than the buyer’s value. Consumers need transparency about whether the assistant is working for them or for the seller.

AI as gatekeeper of reality

This includes content moderation, authenticity signals, and platform recommendation algorithms that now amplify synthetic content.

• Detection of synthetic content.

• Labels for AI-generated media.

• Systems that downrank misleading content.

• Recommendation engines shaped by engagement.

The consumer risk is not only misinformation. It is confusion fatigue. If people cannot tell what is real, they disengage or they fall into cynicism. That changes culture, trust, and politics.

Thank you to our Sponsor: FlashLabs

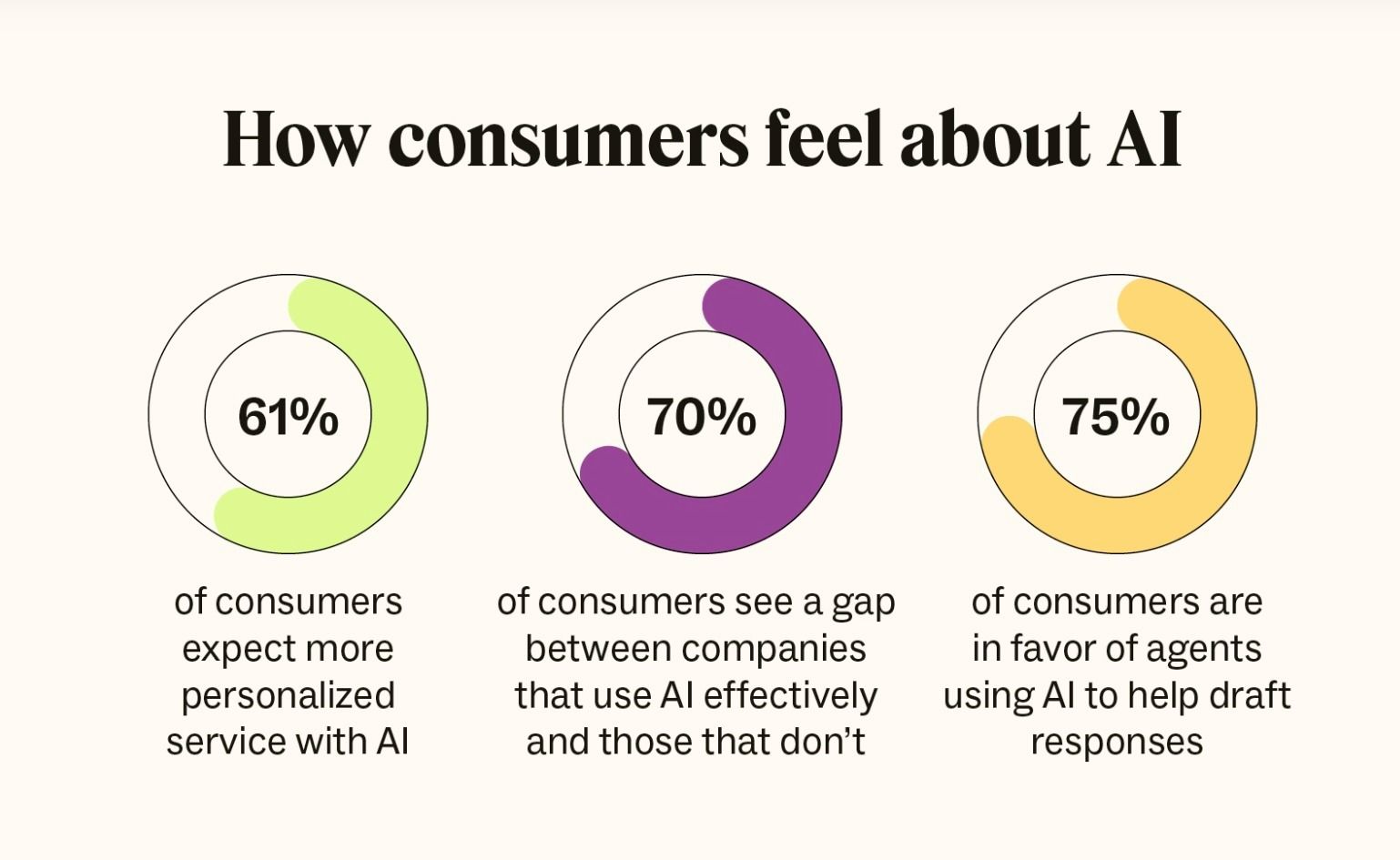

Source: Zendesk CX Trends Report 2026

Convenience versus control is the core consumer tradeoff

The most important consumer tradeoff in AI is between convenience and control. AI features feel best when they are automatic. But the more automatic they become, the more they can act in ways consumers did not intend.

Consumers will increasingly face moments where they have to decide.

• Do I want the AI to do this automatically, or only suggest it?

• Do I want the AI to store my preferences, or forget them after the task?

• Do I want the AI to connect to my accounts, or stay isolated?

• Do I want the AI to act on my behalf, or require approval?

This is why consumer AI is drifting toward agent features, where AI can take actions like booking, ordering, rescheduling, or messaging. That shift increases value and increases risk. Once words turn into actions, errors stop being harmless.

The trust problem consumers feel first

Consumers feel the trust problem earlier than enterprises do, because consumers encounter AI in emotionally sensitive contexts.

A parent sees a realistic fake video and worries.

A teenager sees a synthetic influencer and compares themselves to something unreal.

A customer gets stuck in automated support loops and feels powerless.

A job seeker receives a rejection email that sounds like a human but is not.

Trust issues show up as small emotional reactions that build into a broader skepticism. That skepticism can become the default, which is bad for consumers and bad for honest creators.

From a consumer perspective, trust is built with visible signals, not promises.

• Clear labeling of synthetic content.

• Clear disclosure when you are talking to an AI.

• Clear evidence and sources for factual claims.

• Simple ways to appeal or escalate to a human when needed.

• Predictable behavior that does not change randomly day to day.

Privacy is not a slogan, it is product design

Consumers do not want to read long policies. They want products that make privacy feel straightforward. AI makes this harder because the best personalization comes from more data and more memory. That is the tension.

Consumers should expect modern AI products to offer privacy controls that are not buried.

• A clear explanation of what is stored and for how long.

• The ability to delete history and memory easily.

• A setting that prevents training on personal content.

• On-device processing where possible.

• Separate modes for sensitive tasks like health, finance, and minors.

Privacy is not only about data collection. It is also about inference. Even if an app does not store explicit details, it can infer preferences, habits, and vulnerabilities. Consumers will demand limits on both collection and inference, and regulators will increasingly focus there too.

Thank you to our Sponsor: EezyCollab

AI as a new form of customer service friction

One of the most immediate consumer impacts is how AI changes customer service. Sometimes it is great. It can resolve simple issues fast. Sometimes it is awful. It can block people from reaching a human, confuse edge cases, and create a feeling that the company is hiding behind automation.

Consumers should know the difference between a helpful automation and a deflection system.

• Helpful systems resolve common tasks quickly and offer easy escalation.

• Deflection systems trap users in scripted loops and hide the human option.

The best companies will treat AI support as a trust-building channel, not as a cost-cutting weapon. Consumers will remember the difference.

AI literacy becomes a consumer life skill

AI literacy does not mean learning how models work. It means learning how to interact with AI responsibly and how to spot its failure modes.

A practical consumer AI literacy list looks like this.

• Treat AI output as a draft, not a verdict, when stakes are high.

• Verify factual claims with trusted sources, especially health, finance, and legal claims.

• Be cautious with AI-generated media, especially content designed to trigger anger or fear.

• Do not share sensitive personal information unless you trust the product and understand retention.

• Use AI to generate options, then apply human judgment to choose.

This is not about fear. It is about using the tool well. The same way consumers learned to spot spam emails and scam calls, they will learn to spot synthetic persuasion.

Agents are arriving, and that changes everything

The consumer AI story is shifting from “assist me” to “do it for me.” Agents book trips, manage returns, negotiate bills, and coordinate calendars. That is a big unlock, but it is also the moment when consumers demand stronger guardrails.

Consumers want agent features to include controls like these.

• Spend limits and explicit approvals for purchases.

• Transparent logs showing what the agent did and why.

• Permission boundaries that are easy to understand.

• The ability to pause, undo, and roll back.

• Strong protection from prompt injection and malicious content.
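The first four controls above can be sketched in a few lines of code. What follows is a minimal illustration, not a description of how any real agent product works: the class name `AgentGuardrails`, its fields, and its methods are all hypothetical, and prompt-injection defense is out of scope here. It assumes one spend limit, one approval threshold, a pause switch, a log that records each decision with its reason, and a simple undo.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class AgentGuardrails:
    """Hypothetical guardrail layer for a purchasing agent (illustrative only)."""
    spend_limit: float          # most the agent may spend in total
    approval_threshold: float   # purchases above this need explicit user approval
    spent: float = 0.0
    paused: bool = False
    purchases: List[Tuple[str, float]] = field(default_factory=list)
    log: List[str] = field(default_factory=list)

    def request_purchase(self, item: str, price: float, approved: bool = False) -> bool:
        """Return True if the purchase proceeds. Every decision is logged with a reason."""
        if self.paused:
            self.log.append(f"BLOCKED {item}: agent is paused")
            return False
        if self.spent + price > self.spend_limit:
            self.log.append(f"BLOCKED {item}: would exceed spend limit")
            return False
        if price > self.approval_threshold and not approved:
            self.log.append(f"PENDING {item}: needs explicit approval")
            return False
        self.spent += price
        self.purchases.append((item, price))
        self.log.append(f"ALLOWED {item}: {price:.2f}")
        return True

    def undo_last(self) -> None:
        """Roll back the most recent purchase, if there is one."""
        if self.purchases:
            item, price = self.purchases.pop()
            self.spent -= price
            self.log.append(f"UNDONE {item}: {price:.2f} refunded")


guard = AgentGuardrails(spend_limit=100.0, approval_threshold=25.0)
guard.request_purchase("coffee", 5.0)                     # small purchase, goes through
guard.request_purchase("headphones", 60.0)                # held until the user approves
guard.request_purchase("headphones", 60.0, approved=True)
guard.request_purchase("flight", 80.0, approved=True)     # blocked by the spend limit
guard.undo_last()                                         # rolls back the headphones
for entry in guard.log:
    print(entry)
```

The design choice worth noticing is that the agent never spends silently: every request either goes through, waits for approval, or is blocked, and the log says which and why.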

The consumer perspective is crucial here because consumers are the ones who will bear the cost of mistakes. A wrong flight booking, a mistaken cancellation, an accidental purchase, a message sent to the wrong person. These are not abstract harms. They are real.

From the consumer perspective, AI is entering daily life in the way electricity and smartphones did. Not as one big moment, but as a thousand small changes. The winners will not be the products that feel the most magical in a demo. The winners will be the products that are dependable, transparent, and respectful of the user.

Consumers will not judge AI by its IQ. They will judge it by whether it saves time, reduces stress, protects privacy, and makes reality feel more trustworthy rather than more confusing. AI that earns consumer trust will be the AI that is honest about uncertainty, clear about what it stores, safe about what it can do, and designed to keep the user in control.

Looking to sponsor our Newsletter and Scoble’s X audience?

By sponsoring our newsletter, your company gains exposure to a curated group of AI-focused subscribers, an audience already engaged with the latest developments and opportunities in the industry. This creates a cost-effective and impactful way to grow awareness, build trust, and position your brand as a leader in AI.

Sponsorship packages include:

Dedicated ad placements in the Unaligned newsletter

Product highlights shared with Scoble’s 500,000+ X followers

Curated video features and exclusive content opportunities

Flexible formats for creative brand storytelling

📩 Interested? Contact [email protected], @samlevin on X, +1-415-827-3870

Just Three Things

According to Scoble and Cronin, the top three relevant and recent happenings

World Labs Raises $1B as Autodesk Backs 3D World Models

World Labs, founded by Fei-Fei Li, raised a $1 billion round that includes a $200 million investment from Autodesk. The two companies plan to collaborate on integrating World Labs’ world models, including its Marble product for creating editable 3D environments from prompts, with Autodesk’s 3D design tools, starting with media and entertainment use cases. Autodesk says the partnership fits its broader push into spatial AI, including its own “neural CAD” efforts to generate functional 3D models that understand geometry and real-world constraints. Both sides emphasize combining systems that understand geometry, physics, and dynamics rather than only text. TechCrunch

Dems Pull Back on AI Data Centers Ahead of 2028

Potential 2028 Democratic presidential contenders who recently competed to attract AI data centers with major tax incentives are now pulling back as public opposition grows. Voters are blaming power-hungry data centers for higher electricity bills and worrying that AI will eliminate jobs, pushing governors like JB Pritzker, Josh Shapiro, and Wes Moore to propose pauses, tighter oversight, or stricter guidelines instead of blanket incentives. The shift signals that AI politics are turning from pro-growth bidding wars to a more cautious posture focused on costs, regulation, and voter anxiety. Axios

Deepfake Decline Porn: How AI Videos Turn UK City Life Into Viral Outrage

AI-generated fake videos depicting UK cities, especially Croydon, as filthy, dangerous, and “taxpayer funded” hubs of disorder are spreading fast on TikTok and Instagram, drawing millions of views and spawning copycat accounts. A creator called RadialB says the clips are meant to be funny but are designed to look real so people do not scroll past. They often rely on “roadmen” stereotypes that can provoke racist and politically angry reactions from viewers who believe the scenes are real. The trend fits into “decline porn” content that portrays Western cities as collapsing because of crime and immigration, with cheap AI tools making it easier to manufacture convincing visuals that get reposted for engagement and monetisation. BBC

Scoble’s Top Five X Posts